A regulator walks into your office — or, more precisely, sends a formal request — and asks you to reproduce a credit decision made six months ago. Same input, same reasoning, same output. Exactly.

Your team goes back to the system. The model is there. The logs are there. But the model has been updated twice since then. The reasoning path isn't reproducible. The output may differ. There is no deterministic trail.

That's not a logging problem. It's an architecture problem. And it starts with where the LLM was placed in the decision chain.

The Core Incompatibility

Large language models are probabilistic by design. Given the same input, they don't guarantee the same output. Temperature, model version, sampling strategy, context window — all of these introduce variance. That variance is often a feature: it's what makes LLMs useful for analysis, synthesis, and exploration.

In regulated financial systems, that same variance is a liability.

Credit decisions, fraud flags, AML alerts, loan approvals — these are outcomes that must be reproducible, explainable, and auditable. Regulators don't ask for a reasonable approximation of what the system decided. They ask for the exact decision, the exact reasoning, and the exact rule that produced it. A system built on a probabilistic layer cannot satisfy that requirement by design, regardless of how good the model is.

This isn't a temporary limitation waiting to be solved by the next model release. It's a fundamental mismatch between how LLMs work and what regulated decision systems require.

The Architecture That Actually Works

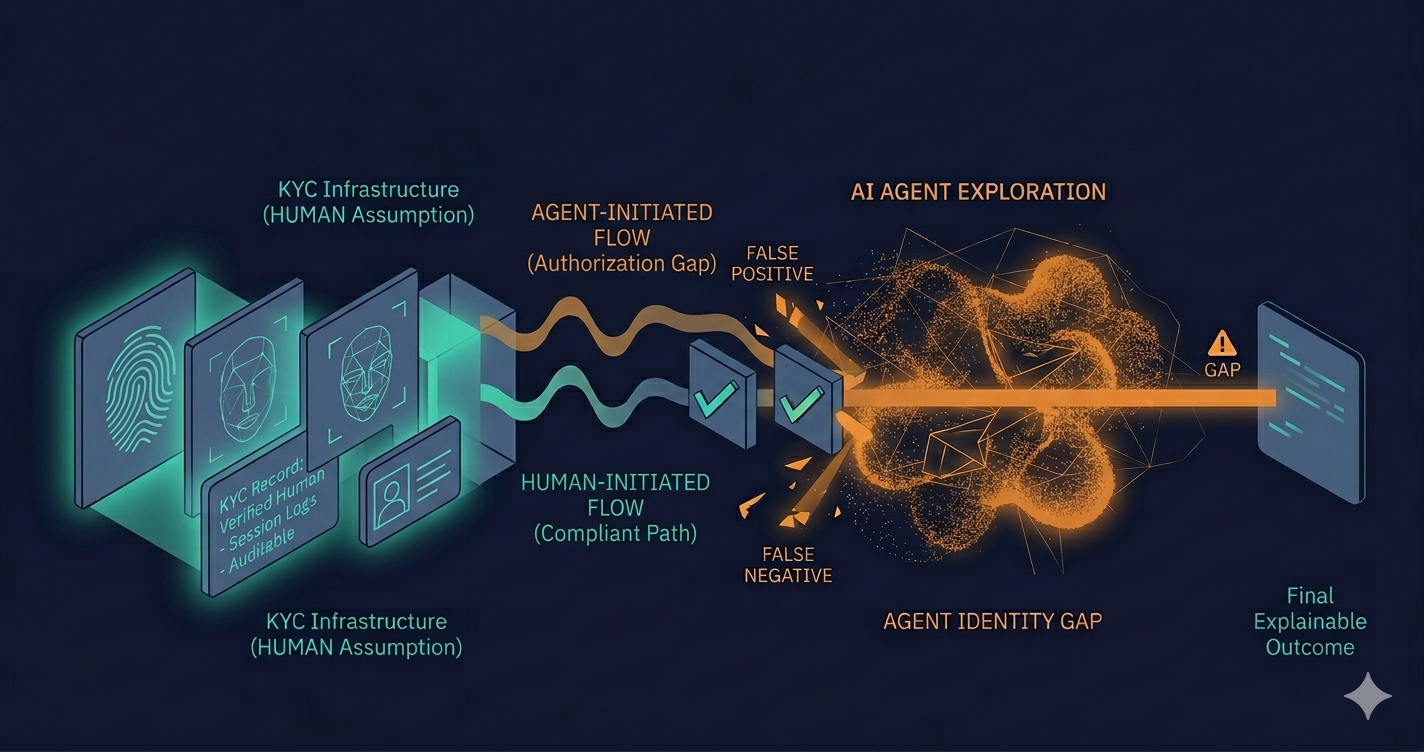

The pattern that practitioners are converging on separates the system into two distinct layers, each with a clearly defined role.

The LLM as the analysis layer. The model does what it does well: it reads documents, flags anomalies, summarizes context, surfaces relevant signals, generates a structured proposal. It operates as a preprocessing component — powerful, flexible, context-aware. But it does not produce the binding outcome. It produces input for the layer that will.

The deterministic policy engine as the decision authority. A separate, versioned system applies fixed rules to the proposal generated by the LLM and produces the binding outcome. This engine is auditable by design: the same input always produces the same output, the rules are explicit and versionable, and every decision can be reproduced exactly — six months later, in front of a regulator, with full traceability.

The key principle: the LLM explores, analyzes, and proposes. The policy engine decides. Those two roles require different systems and different accountability structures.

What This Looks Like in Practice

A lending platform using this architecture might work like this: a loan application comes in, the LLM processes the applicant's documents, cross-references relevant signals, and produces a structured risk assessment with recommended parameters. That assessment goes into the policy engine, which applies versioned credit rules, produces an approval, denial, or conditional outcome, and logs the exact rule version and inputs that produced it.

The LLM's output is traceable but not the authority. The policy engine's output is both traceable and binding. If a regulator asks about that decision in six months, the policy engine can reproduce it exactly — independent of whatever has happened to the model since.

The same pattern applies to fraud detection, AML alerting, and any other compliance-adjacent workflow where decisions must survive regulatory scrutiny.

Why Teams Get This Wrong

The most common failure mode isn't ignorance — it's timeline pressure combined with a vendor landscape that makes the wrong architecture feel like the right one.

AI vendors demo their models producing decisions. The demo is clean, the output looks right, and the path of least resistance is to wire the model directly into the workflow. Nobody in that demo asks what happens when the model is updated, or how you reproduce a decision from a prior version, or what the audit trail looks like when a regulator asks.

The engineering team implementing the system often lacks the regulatory context to know that those questions matter. The compliance team often lacks the technical fluency to enforce the architectural requirement at sprint level. The result is a system that works in production — until the first regulatory review, when the absence of a deterministic layer becomes visible in the worst possible way.

This is one of the cleaner examples of how risk debt accumulates in fintech: each individual decision to defer the policy engine feels reasonable at the time. The cost surfaces when the audit arrives.

Who Should Own This Decision

Here's the tension that most fintech teams haven't resolved: the LLM/policy engine separation is simultaneously an engineering architecture decision, a compliance requirement, and a product design constraint. It sits at the intersection of all three, which means it tends to fall in the gap between them.

The CTO is focused on shipping. The compliance officer isn't fluent enough in model architecture to mandate the separation explicitly. Product is optimizing for features. Nobody is watching the boundary between the probabilistic layer and the decision authority — until someone has to.

Teams operating in regulated environments, building AI into compliance and risk workflows, are discovering that this boundary needs an owner. Not a committee. A specific person or team that understands both what the model can do and what the regulation requires — and has the authority to make the architecture reflect both.

Getting from AI pilot to production in a regulated fintech environment isn't primarily a modeling problem. It's an infrastructure and ownership problem. The policy engine is part of that infrastructure. So is deciding who is responsible for keeping it correct as the rules change.

A Practical Starting Point

If your team has already deployed an LLM into a decision workflow without a deterministic layer underneath it, the path forward isn't a rewrite. It's an incremental separation: identify the binding decision points in the current workflow, extract them from the model's output path, and route them through a versioned policy layer — one decision type at a time.

The model doesn't need to change. The architecture around it does. That work sits squarely in the gap between engineering and compliance. Which is exactly where the most expensive fintech problems tend to live.

Kreitech works with fintech teams building and operating systems in regulated environments. We help teams bridge the gap between engineering decisions and compliance requirements — including the architecture decisions that don't fit cleanly in either domain. Explore how we deliver inside these constraints.