The KYC flow your team built works. It handles document verification, liveness checks, beneficial ownership mapping. It scales reasonably well. It satisfies the auditor.

It was also designed with an assumption so fundamental that nobody wrote it down: there is a human on the other side.

That assumption is about to become a problem.

The Assumption Nobody Documented

KYC infrastructure was built around a specific model of interaction. A person presents credentials. The system verifies them. A decision is made about whether that person can proceed.

Every piece of that flow (the document checks, the biometric validation, the behavioral signals used to detect fraud) was designed to evaluate a human actor. Not because engineers made a conscious choice to exclude non-human actors, but because in 2018, or 2020, or even 2022, the idea of an AI agent initiating a regulated financial transaction wasn't a real engineering constraint. It was a thought experiment.

It isn't anymore.

Fintech teams are deploying AI agents that initiate payments, trigger credit applications, execute treasury operations. Those agents hit the same KYC stack that was built for humans. And the stack doesn't know what to do with them.

Three Ways This Breaks in Practice

The failure isn't always dramatic. Sometimes it's invisible until it isn't.

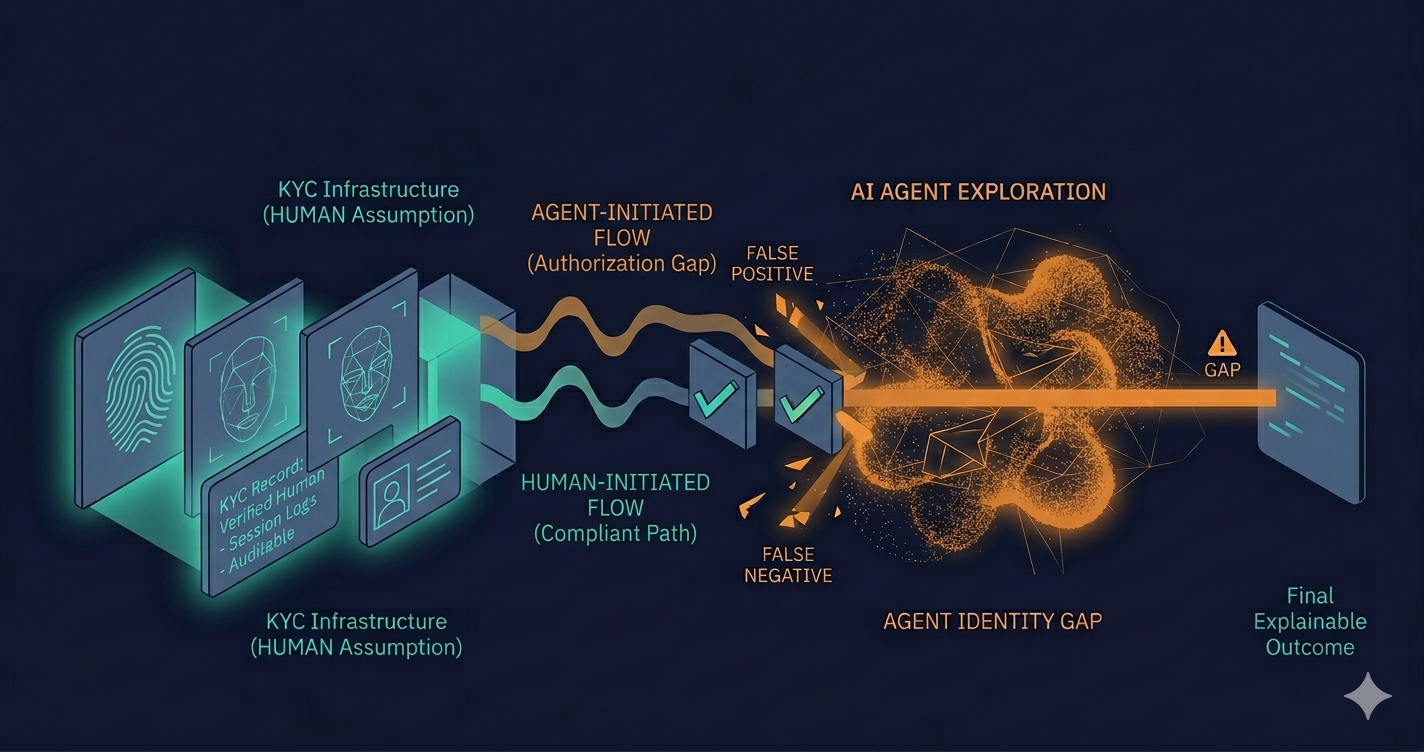

The false positive problem. An AI agent initiates a transaction with behavioral patterns that look nothing like a human user: no hesitation, no session warmup, no device fingerprint that matches a known customer. The fraud model flags it. The transaction is blocked. Nobody designed a remediation path for this scenario because nobody anticipated it.

The false negative problem. The agent has been whitelisted somewhere upstream to avoid constant friction. The KYC checks are bypassed or reduced. The audit trail shows a transaction that cleared without meaningful identity verification. The regulator asks why.

The audit trail problem. Even when the transaction completes correctly, the KYC record was designed to document a human identity verification event. It captures name, document type, verification result. It doesn't capture what kind of actor initiated the process, under what authorization, or whether that authorization was itself auditable. The artifact exists. It just doesn't answer the questions a regulator is now positioned to ask.
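As a minimal sketch of what closing the audit trail gap could look like, the record below keeps the three fields named above (name, document type, verification result) and adds the missing ones: who or what initiated the event, and under which authorization. Every name here (`KycAuditRecord`, `ActorType`, `authorization_id`, `authorized_by`) is a hypothetical chosen for illustration, not a field from any real KYC product or regulatory schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class ActorType(str, Enum):
    """Hypothetical classification of who initiated the verification event."""
    HUMAN = "human"
    AI_AGENT = "ai_agent"


@dataclass(frozen=True)
class KycAuditRecord:
    # Fields a human-centric KYC record typically captures already.
    subject_name: str
    document_type: str
    verification_result: str
    # Hypothetical additions: what kind of actor initiated the process,
    # and which auditable authorization it acted under.
    actor_type: ActorType = ActorType.HUMAN
    authorization_id: Optional[str] = None
    authorized_by: Optional[str] = None

    def gaps(self) -> List[str]:
        """List the authorization fields missing for an agent-initiated event."""
        if self.actor_type is ActorType.HUMAN:
            return []
        missing = []
        if not self.authorization_id:
            missing.append("authorization_id")
        if not self.authorized_by:
            missing.append("authorized_by")
        return missing


# An agent-initiated record with no authorization attached is flagged.
record = KycAuditRecord(
    subject_name="Acme Ltd",
    document_type="certificate_of_incorporation",
    verification_result="pass",
    actor_type=ActorType.AI_AGENT,
)
print(record.gaps())
```

The point of `gaps()` is not the specific fields but the habit: an agent-initiated record that cannot name its authorization should be detectable by the system itself, not discovered later by an examiner.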

These aren't edge cases in a distant future. Teams building AI in production inside regulated fintech environments are encountering versions of this today.

Who Owns This Problem

Here is the honest answer: nobody does, and that's the actual problem.

The team that built the KYC stack wasn't thinking about AI agents. The team deploying the AI agents wasn't thinking about KYC architecture. Compliance was consulted on the AI use case at a high level, but the interaction between the agent's authorization model and the identity verification layer never made it onto anyone's checklist.

This is the same ownership gap that sits behind so many compliance failures in fintech, just wearing different clothes. It showed up when UI decisions started carrying legal weight without anyone owning the intersection between engineering and regulatory requirements. It shows up here when two separate technical decisions, made by two separate teams, create a compliance exposure that neither team can see from its own position.

The difference is that with UI compliance, the failure is visible after the fact. With AI agent identity, the failure might be invisible until an audit surfaces it, or until a bad actor figures out the gap before the engineering team does.

What Needs to Change

There is no established framework for this yet. That's not a gap in this article; it's the actual state of the industry.

What is clear is that the architectural question needs to be asked explicitly: how does your system distinguish between a human-initiated transaction and an agent-initiated one, and what does that distinction mean for your KYC requirements, your fraud controls, and your audit trail?

Some teams are building agent identity layers: explicit authorization models that sit above the KYC stack and define what an AI agent is allowed to initiate, under what conditions, with what human oversight attached. This is close to the "LLM as analysis layer, deterministic engine as decision authority" pattern that regulated teams converge on when they start taking AI in production seriously. The agent proposes. A versioned, auditable policy layer decides. The KYC stack records both.
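The propose/decide/record split can be sketched in a few lines. This is an illustration under stated assumptions, not a reference design: the grant fields (`allowed_actions`, `max_amount`, `human_review_above`), the outcome labels, and the `POLICY_VERSION` string are all hypothetical, and a real policy layer would load its rules from versioned, reviewed configuration rather than in-code constants.

```python
from dataclasses import asdict, dataclass
from typing import Dict, FrozenSet

POLICY_VERSION = "policy-v1-example"  # hypothetical version label


@dataclass(frozen=True)
class AgentGrant:
    """What a given agent is allowed to initiate (hypothetical shape)."""
    agent_id: str
    allowed_actions: FrozenSet[str]
    max_amount: int          # hard ceiling, in minor currency units
    human_review_above: int  # amounts above this need a human sign-off


@dataclass(frozen=True)
class Proposal:
    """What the agent asks to do. The agent never decides."""
    agent_id: str
    action: str
    amount: int


@dataclass(frozen=True)
class Decision:
    outcome: str  # "approve", "needs_human_review", or "deny"
    reason: str
    policy_version: str


def decide(proposal: Proposal, grants: Dict[str, AgentGrant]) -> Decision:
    """Deterministic policy check: same inputs always give the same decision."""
    grant = grants.get(proposal.agent_id)
    if grant is None:
        return Decision("deny", "unknown agent", POLICY_VERSION)
    if proposal.action not in grant.allowed_actions:
        return Decision("deny", "action not granted", POLICY_VERSION)
    if proposal.amount > grant.max_amount:
        return Decision("deny", "amount exceeds grant", POLICY_VERSION)
    if proposal.amount > grant.human_review_above:
        return Decision("needs_human_review", "above review threshold", POLICY_VERSION)
    return Decision("approve", "within grant", POLICY_VERSION)


def audit_entry(proposal: Proposal, decision: Decision) -> dict:
    """Record both the proposal and the decision, as the pattern requires."""
    return {"proposal": asdict(proposal), "decision": asdict(decision)}


grants = {
    "treasury-bot": AgentGrant(
        agent_id="treasury-bot",
        allowed_actions=frozenset({"initiate_payment"}),
        max_amount=1_000_000,
        human_review_above=100_000,
    )
}
proposal = Proposal("treasury-bot", "initiate_payment", 250_000)
print(audit_entry(proposal, decide(proposal, grants)))
```

Because `decide` is a pure function of the proposal and the grants, and every decision carries the policy version that produced it, the outcome can be replayed later. An unknown agent is denied by default rather than whitelisted, which is the opposite of the upstream bypass described in the false negative scenario.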

The teams that build this before they need it will be in a different position than the teams that discover they need it during an exam.

The question worth asking now: does your current KYC architecture have an answer for what happens when the actor isn't human?